Week 2026-09

In the latest issue, we look at what will hold copyright value once everything is AI-generated. @vlkodotnet

Week’s Highlight: AI Relicensing

Something interesting happened last week. A new version of the chardet library, version 7.0.0, was released, promising better performance and speed. Compared to version 6.0.0, it was rewritten from scratch with AI assistance while keeping the same API.

The library’s biggest pain point had always been its LGPL license, inherited from the original Mozilla C++ code it was based on. After a complete rewrite, the authors decided to release it under the more widely accepted MIT license. Everything went smoothly — until the original author of the library stepped forward and challenged whether such a relicensing was even legally permissible.

We’ll likely encounter similar cases more and more often. Anyone can take a library’s interface and its unit tests, use AI to build a new implementation, and then publish it under a less restrictive license. In the past, that would have required an enormous amount of effort — but today, with AI tools, it’s achievable in a fraction of the time.

One could invoke the well-known Oracle v. Google case, in which the U.S. Supreme Court held that Google’s reuse of the Java API declarations was fair use, although it left open whether an API (or in this case, a library interface) can be copyrighted at all. On the other hand, U.S. courts have held that human authorship is a fundamental requirement for copyright, meaning purely AI-generated content cannot receive copyright protection.

I don’t have a definitive answer to what the right solution here is, but I do have one thought worth pondering. If you generate your code with AI, you will very likely lose copyright protection over it. And honestly — given how capable AI has become at helping with software development, I see no reason not to use it. So what will our authorial contribution actually be? Perhaps the prompts we give to AI. Those will turn out to be more important than we think. You might want to start keeping a history of your prompts and conversations, with timestamps in the filenames. A proper system for that would be very welcome. OpenAI sees an opportunity here too — which is why word is leaking out that they’re working on an alternative to GitHub.
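As a rough illustration of the prompt-history idea, here is a minimal Python sketch that stores each prompt in its own file with a UTC timestamp in the filename. The function name `save_prompt`, the `prompts` directory, and the filename pattern are my own assumptions, not an established convention or tool.

```python
from datetime import datetime, timezone
from pathlib import Path
from typing import Optional


def save_prompt(history_dir: Path, prompt: str, now: Optional[datetime] = None) -> Path:
    """Write one prompt to a file whose name starts with a UTC timestamp.

    Hypothetical helper for illustration only. Two prompts saved within
    the same second would overwrite each other; a real system would need
    a counter or a hash in the name.
    """
    now = (now or datetime.now(timezone.utc)).astimezone(timezone.utc)
    history_dir.mkdir(parents=True, exist_ok=True)
    # Example name: 20260302T101500Z-prompt.md (sorts chronologically).
    path = history_dir / f"{now.strftime('%Y%m%dT%H%M%SZ')}-prompt.md"
    path.write_text(prompt, encoding="utf-8")
    return path


if __name__ == "__main__":
    saved = save_prompt(Path("prompts"), "Rewrite the encoding detector with the same API.")
    print(f"Saved prompt to {saved}")
```

Committing such a folder next to the code would at least give you a dated record of what you asked for. Whether that carries any legal weight is a separate and open question.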

MWC 2026

It’s been a while since I’ve seen a genuinely interesting mobile hardware innovation — not counting second screens, whether on foldables or as small informational displays on the back of a phone. Now, Xiaomi in collaboration with Leica has introduced a physically rotating ring that lets you control your phone’s camera.

The Honor Magic V6 is the first foldable you can wash with a high-pressure water gun, since it carries an IP69 rating.

Honor also unveiled the Robot Phone, which hides a robotic camera with a gimbal inside — capable of tracking objects, for example.

Nothing did not announce a new fourth-generation flagship, apparently because there was no reason to. Instead, the company introduced the 4A and 4A Pro. The Pro in particular looks like a phone you can carry without a case, thanks to its metal back panel.

Tecno unveiled a modular phone concept: a base handset paired with a collection of components that attach via magnets.

Lenovo put together an interesting lineup as well. Highlights include a foldable gaming console concept, their own take on the Surface tablet with a detachable keyboard, and much more.

Apple News

Apple had a busy week too. The biggest announcement was the MacBook Neo, a laptop powered by last year’s iPhone chip, with 8 GB of RAM and a $600 price tag. No plastic in sight; it comes in a clean aluminum chassis. I’m curious to see how the benchmarks pan out and whether 8 GB of RAM is enough. If it is, this looks like an appealing product, especially for students.

Aimed primarily at enterprise customers, the iPhone 17e brings a new 5G modem and MagSafe compared to its predecessor.

MacBooks received an M5 update. What’s particularly interesting is that the M5 Pro and M5 Max share the same CPU core and differ only in the GPU. In other words, Apple is now assembling them similarly to AMD and recent Intel processors — combining different components onto a shared substrate.

Security Insights

Google’s Cloud Security team published a report outlining the threats we’ll be defending against in the coming year. Attackers are increasingly leveraging AI to automate attacks and social engineering. Prompt injection will be everywhere, and there’s a growing need to identify AI agents. Since AI agents are expected to be ubiquitous, distinguishing your own agents from adversarial ones will become a real challenge.

These days, all it takes to compromise your code is a single unsanitized input — especially when that input is passed into an LLM. In one recent case, it was a GitHub Issue title processed by Claude Code.

Privacy falls under security too. If you own Meta AI Smart Glasses, be mindful of when and where you wear them. You might unknowingly end up in a review program where contractors — located in Africa — are asked to evaluate whether the AI model in the glasses correctly classified what it saw.

BIZ Insights

Nvidia has committed $2 billion in future contracts to each of Lumentum and Coherent — two companies working on photonic technologies that reduce latency and power consumption while increasing data throughput in data centers. This isn’t a direct equity investment, but it secures Nvidia’s supply chain, and companies backed by such contracts have no trouble securing development loans.

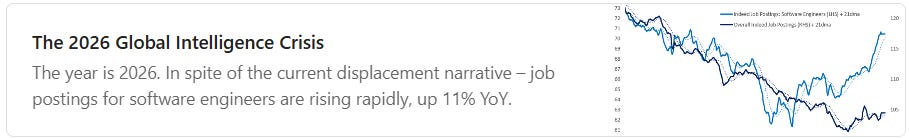

Here are two contrasting charts. The first shows a clear decline in job postings in the US tech sector, down to levels not seen since the dot-com bubble.

The second, however, shows that despite this decline, demand for software engineers is beginning to recover. There are several possible reasons:

- companies are looking for people who can implement AI,
- AI needs oversight, and that requires people,
- AI is creating new jobs, though I’m quite skeptical of this one.

The adoption challenge is likely part of the picture, as Anthropic’s latest analysis also suggests. In theory, AI could cover a broad range of work tasks — but in practice, observed coverage remains partial. Currently, the highest task coverage is among software developers, at around 75%.

AI Insights

OpenAI released two models in quick succession. First came GPT-5.3 Instant, focused on improving everyday conversations.

Two days later, GPT-5.4 arrived, integrating GPT-5.3 Codex and tool use to close the gap with Opus 4.6. According to benchmarks, it roughly reaches the level of Opus 4.5, which is still a solid result. Beyond coding improvements, the biggest gains are in working with documents like Word and Excel.

YuanLab AI has released what is currently the largest multimodal open-weight MoE model — Yuan 3.0 Ultra, with 1 trillion parameters. Its benchmark results are competitive, making it the current Chinese leader in this space.

Raycast — the team behind the popular app launcher — now has their own vibe-coding tool for mini apps. It’s called Glaze, and it lets you quickly build desktop applications for macOS and publish them directly to the Mac App Store.

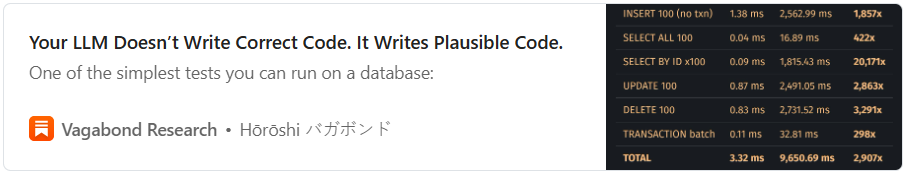

Finally, here’s another case study on unnecessary token burning. Someone used AI to port SQLite to Rust (not the Turso/libsql rewrite, but a separate project) and the results were about what you’d expect: poor performance and plenty of bugs.

.NET Insights

We sometimes assume that slapping async on a problem will fix it. But async doesn’t increase capacity — it’s just a queue. If the system behind the scenes can’t keep up, async only delays the problem. That’s why it’s important to monitor not just your system’s entry points, but its internal components as well.
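To make the "it’s just a queue" point concrete, here is a small language-neutral sketch in Python (not .NET code). It assumes a simplified model in which requests arrive at a fixed rate, the async front end accepts all of them immediately, and the backend completes at most a fixed number per second. The function name and the numbers are made up for illustration.

```python
from typing import List


def backlog_over_time(arrivals_per_sec: int, capacity_per_sec: int, seconds: int) -> List[int]:
    """Return how many requests are still waiting at the end of each second."""
    waiting = 0
    history = []
    for _ in range(seconds):
        waiting += arrivals_per_sec                # async accepts everything right away
        waiting -= min(waiting, capacity_per_sec)  # the backend drains only what it can
        history.append(waiting)
    return history


if __name__ == "__main__":
    # Backend slower than the arrival rate: the backlog grows every second.
    print(backlog_over_time(arrivals_per_sec=120, capacity_per_sec=100, seconds=5))
    # Backend faster than the arrival rate: nothing piles up.
    print(backlog_over_time(arrivals_per_sec=80, capacity_per_sec=100, seconds=5))
```

In the first case the waiting count climbs by 20 every second with no upper bound, which is why queue depth and downstream latency are worth monitoring alongside the entry points.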

Links Drop

Xbox revealed the codename for its next-generation console: Project Helix. It will use an AMD chip and operate as a hybrid system that can also function as a traditional PC. AI integration is included, of course.

Wondering where to host your VM for the best price-to-performance ratio? This benchmark will help.

Closing Visual

It looks like the classic XKCD Dependency comic — but this one is interactive.